Before you read any further, be sure to go and read Kendra Little’s fantastic post on How to Survive Opening A Microsoft Support Ticket for SQL Server or Azure SQL. There are details I won’t go into in this post, but I just wanted to note that the issues Kendra raises are not ones that only she experiences.

The Basics

Availability Groups (AGs) are a great way to handle business continuity. When they work, they work great. When they don't work… well, the documentation and tooling to help you get through are rather lacking.

One of the useful things about AGs is that replicas can sit on different subnets. This helps if you want an AG to span multiple data centers, if you need to perform cage migrations, or, in the case of Azure VMs, if you want an AG that can fail over automatically without being at the mercy of an Azure load balancer timeout.

Failing an AG over to a different subnet works well, but it does require configuring your Windows Server Failover Cluster (WSFC) resource so that all of the IPs associated with the AG listener are registered in DNS (the RegisterAllProvidersIp setting, which is now the default). If they aren't and you fail over to a different subnet, you're at the mercy of your DNS TTL: clients won't connect to SQL Server on the new subnet until their cached record expires (or until you connect to each machine and flush its DNS cache).

With RegisterAllProvidersIp enabled, every IP address associated with the AG listener is registered in DNS. If the client connection string also includes MultiSubnetFailover=True (with supported client libraries), the client tries all of the returned addresses and connects to the one that responds.
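
To picture what the client is doing, here is a minimal Python sketch of the idea behind MultiSubnetFailover=True: attempt a TCP connection to every address the listener name resolves to at the same time, and keep whichever answers first. This is only an illustration under stated assumptions, not how SqlClient or ODBC actually implement it; the function names, the 2-second timeout, the default port of 1433, and the pluggable `connect` callable are all hypothetical.

```python
import concurrent.futures
import socket
from typing import Callable, Iterable, Optional


def tcp_connect(address: str, port: int, timeout: float) -> bool:
    """Return True if a TCP connection to address:port opens within timeout seconds."""
    try:
        with socket.create_connection((address, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False


def first_responding_address(
    addresses: Iterable[str],
    port: int = 1433,  # assumed listener port
    timeout: float = 2.0,  # assumed per-attempt timeout
    connect: Callable[[str, int, float], bool] = tcp_connect,
) -> Optional[str]:
    """Try every address at once and return the first one that accepts a connection.

    Only the listener IP on the currently active subnet should answer; attempts
    against the other subnets' IPs simply fail or time out.
    """
    candidates = list(addresses)
    if not candidates:
        return None
    pool = concurrent.futures.ThreadPoolExecutor(max_workers=len(candidates))
    try:
        futures = {pool.submit(connect, ip, port, timeout): ip for ip in candidates}
        for future in concurrent.futures.as_completed(futures):
            if future.result():
                return futures[future]
        return None
    finally:
        # Don't block on the slower attempts once we have an answer.
        pool.shutdown(wait=False)
```

The design point is the parallelism: without it, a client working through the addresses one at a time could sit on a timeout for the inactive subnet before ever reaching the active one.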

Adding a new IP to an AG listener is done with an ALTER AVAILABILITY GROUP MODIFY LISTENER command. This normally updates DNS appropriately, but there are manual steps you can take to make sure it's done. Why the extra step? Because if the IP is not registered and you fail over to the new subnet, you end up with the TTL problem I mentioned earlier. One way around this is to create a new A record in DNS that points to the new IP. That way, when you fail over, the IP is already in DNS and you don't run into issues. You can create the A record either through the DNS console or with PowerShell.
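
If you want to script the check that nslookup gives you, a minimal Python sketch can compare the addresses the listener name resolves to against the IPs you expect it to have. It assumes an IPv4-only listener, and the function names are hypothetical. One caveat: nslookup queries a DNS server directly, whereas `socket.getaddrinfo` goes through the operating system's resolver, so a locally cached answer can hide a change until its TTL expires.

```python
import socket
from typing import Callable, Iterable, Set


def resolve_ipv4(hostname: str) -> Set[str]:
    """Return every IPv4 address the operating system's resolver gives for hostname."""
    infos = socket.getaddrinfo(hostname, None, family=socket.AF_INET, type=socket.SOCK_STREAM)
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr is (ip, port).
    return {sockaddr[0] for _family, _type, _proto, _canonname, sockaddr in infos}


def missing_listener_ips(
    hostname: str,
    expected_ips: Iterable[str],
    resolve: Callable[[str], Set[str]] = resolve_ipv4,
) -> Set[str]:
    """Return the listener IPs we expect to see that DNS is not currently returning."""
    return set(expected_ips) - resolve(hostname)
```

Calling something like `missing_listener_ips("aglistener.example.com", ["10.0.1.10", "10.0.2.10"])` (a hypothetical listener name and IPs, one per subnet) and getting back an empty set is the scripted equivalent of a clean nslookup.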

The Issue

A requirement came in for a new server in a new subnet in an existing AG. A simple process, and one that had been done dozens of times before with a well-established SOP. In this instance, the new server was added to the WSFC, the logins were added, the databases were restored, the instance was added to the AG, and the listener was modified to include the new IP.

After getting the DNS team to manually add the new A record, an nslookup confirmed that the IP appeared in the DNS record.

Great stuff! Ready to move into service then.

Except that, before any traffic was sent to the new instance, the nslookup was run again and the new IP had somehow vanished from the A record. The DNS team stated they hadn't removed it. Logs showed that none of the DBAs had executed anything to remove the IP, and yet it had vanished.

The DNS team added it back once more. Everyone validated it.

The next day it was gone again.

The DNS admin did some digging and found that the AG listener's computer account had deleted it. Odd. So I went through the SQL Server logs. Nothing there. Then I dumped the cluster logs and went through those.

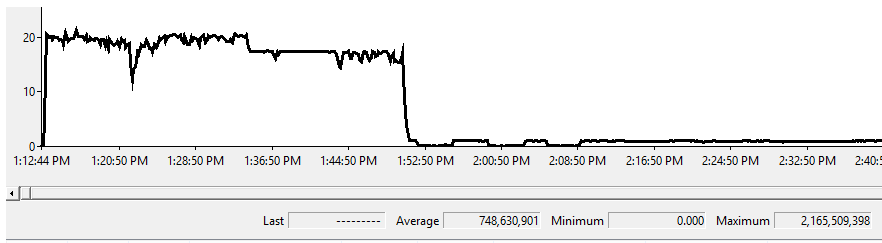

Some entries in the logs indicated that the WSFC was checking that the IPs were valid, but they listed only the IPs that already existed, not the newly added one. Looking back, this process ran every 24 hours.

As I was unfamiliar with this behavior, it was time to open a ticket.

The Investigation

After explaining the issue multiple times, collecting many sets of logs, and answering the same questions over and over, we received a couple of "things to try". These included turning off RegisterAllProvidersIp (which would have caused an outage on a failover to a box on a different subnet) and removing the AG listener's permissions in DNS (which would mean we couldn't add new IPs using TSQL or PowerShell).

After several weeks of false starts and recreating the A record over and over again (I truly feel bad for the DNS admin, who kept his PowerShell script to hand and just hit Enter once a day), we finally got to someone who went beyond reading random web pages and gave us the first piece of useful information.

The Fix

The short version is that after adding an IP to an AG listener, you have to restart the listener's network name resource for it to actually pick up the change. You can do this either by failing the AG over to another replica or by taking the network name resource offline and bringing it back online, using Failover Cluster Manager or PowerShell.

When a new IP is added to the AG listener, it's added to the static configuration, but that configuration isn't read in until the network name resource is restarted. In this case, we hadn't performed a failover or restarted that resource, so the WSFC used its cached configuration to validate the IPs in DNS. When it noticed that DNS had an extra IP that wasn't in the cached configuration, it removed it.
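
To make the sequence of events easier to follow, here is a toy Python model of the behavior as it was explained to us: a static configuration that the listener change updates, a cached configuration that only a restart or failover refreshes, and a periodic check that deletes any A record not in the cache. It is not how the WSFC is actually implemented; the class, its methods, and the example IPs are all hypothetical, and the real check almost certainly does more than this.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class ListenerNetworkName:
    """Toy model of an AG listener's network name resource (hypothetical, not WSFC code)."""

    # Static configuration: what the listener change writes.
    static_ips: Set[str]
    # Cached configuration: what the running resource uses; refreshed only on restart.
    cached_ips: Set[str] = field(init=False, default_factory=set)

    def __post_init__(self) -> None:
        # Copy so the caller's set isn't mutated; coming online reads the static config.
        self.static_ips = set(self.static_ips)
        self.cached_ips = set(self.static_ips)

    def add_ip(self, ip: str) -> None:
        # Adding an IP changes the static config only; the cache is left as-is.
        self.static_ips.add(ip)

    def restart(self) -> None:
        # Offline/online (or a failover) re-reads the static config into the cache.
        self.cached_ips = set(self.static_ips)

    def dns_check(self, dns_a_records: Set[str]) -> Set[str]:
        """Periodic check: delete A records not in the cached config; return what was deleted."""
        stale = dns_a_records - self.cached_ips
        dns_a_records.difference_update(stale)
        return stale


if __name__ == "__main__":
    name = ListenerNetworkName({"10.0.1.10"})
    dns = {"10.0.1.10"}

    name.add_ip("10.0.2.10")    # listener modified to include the new subnet's IP
    dns.add("10.0.2.10")        # DNS team adds the A record by hand
    print(name.dns_check(dns))  # the new record is deleted: {'10.0.2.10'}

    dns.add("10.0.2.10")        # record added once more...
    name.restart()              # ...and the network name resource restarted
    print(name.dns_check(dns))  # nothing deleted this time: set()
```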

After the A record was added once more and the network name resource was restarted after hours, the new record survived the WSFC's next DNS check. We left it a couple more days to be sure it wasn't going to vanish again, and then the new server was put fully into service.

I asked for a link to documentation on this facet of AGs and WSFCs. Apparently there is none. So consider this a warning for anyone adding listener IPs in additional subnets: restart your resources to make sure your change is picked up.