One day you’re happily working away and get interrupted by an alert that says your transaction logs are getting full, or maybe growing. Ever vigilant, you log on, crank open SSMS or ADS, and take a look at sys.databases to check out the log_reuse_wait_desc.
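If you want to skip the clicking around, a query along these lines shows the wait reason alongside how full each log is. Treat it as a sketch: `YourDatabase` is a placeholder name, not something from this story.

```sql
-- Why can't the log be truncated? (YourDatabase is a placeholder name.)
SELECT name,
       recovery_model_desc,
       log_reuse_wait_desc
FROM sys.databases
WHERE name = N'YourDatabase';

-- How full is each transaction log on this instance?
DBCC SQLPERF(LOGSPACE);
```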

AVAILABILITY_REPLICA, the catalog view proudly advises. Availability Groups – the lifesaver of your organization and the bane of your nightmares.

You crack open a SQL script and run it against the primary to see which replica is running behind. Strangely, all the replicas appear to be up to date. The send queue is hovering around 60. The redo queue is no greater than a thousand on any of the asynchronous replicas.
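The exact script doesn't matter much; one possible version, run on the primary, might look like the sketch below. It assumes SQL Server 2014 or later (for `is_primary_replica`), and note that the DMV reports both queue sizes in KB.

```sql
-- Run on the primary: per-database send and redo queues for every replica.
SELECT ar.replica_server_name,
       DB_NAME(drs.database_id)  AS database_name,
       drs.is_primary_replica,
       drs.synchronization_state_desc,
       drs.log_send_queue_size,  -- KB of log not yet sent to the secondary
       drs.redo_queue_size,      -- KB of log not yet redone on the secondary
       drs.last_hardened_lsn,
       drs.truncation_lsn
FROM sys.dm_hadr_database_replica_states AS drs
JOIN sys.availability_replicas AS ar
  ON ar.replica_id = drs.replica_id
ORDER BY ar.replica_server_name, database_name;
```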

Unsure why this is happening, you use the tried and trusted method of restarting the HADR endpoint to see if that kicks things into motion. Nothing is resolved. The log is getting fuller and fuller and you really don’t want that thing to grow.
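For reference, bouncing the endpoint can be sketched as below. The name `Hadr_endpoint` is an assumption (it is the default the AG wizard creates), so check what yours is called first. Bear in mind that replicas on this instance are disconnected while the endpoint is stopped.

```sql
-- Find the name and current state of the mirroring/HADR endpoint.
SELECT name, state_desc
FROM sys.database_mirroring_endpoints;

-- Restart it. Hadr_endpoint is an assumed name; substitute your own.
ALTER ENDPOINT [Hadr_endpoint] STATE = STOPPED;
ALTER ENDPOINT [Hadr_endpoint] STATE = STARTED;
```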

What do you do next?

You may go around to the secondary replicas and remove them from the AG, one at a time, to see if that fixes the problem. Alternatively, you go ahead and check the running sessions on each of the replicas to see if there’s something going on you haven’t considered.
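Checking the running sessions could be done with something like the following, run on each replica in turn. It is only a sketch of the idea; any session-listing script you already trust would do the same job.

```sql
-- Run on each replica: what are user sessions currently executing?
SELECT r.session_id,
       r.command,
       r.status,
       r.start_time,
       r.percent_complete,       -- populated for backups and restores
       r.wait_type,
       DB_NAME(r.database_id) AS database_name,
       s.login_name,
       s.program_name,
       t.text                 AS sql_text
FROM sys.dm_exec_requests AS r
JOIN sys.dm_exec_sessions AS s
  ON s.session_id = r.session_id
OUTER APPLY sys.dm_exec_sql_text(r.sql_handle) AS t
WHERE s.is_user_process = 1
  AND r.session_id <> @@SPID     -- leave out this query itself
ORDER BY r.start_time;           -- oldest requests first
```

Sorting by `start_time` puts anything that has been running since last night at the top.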

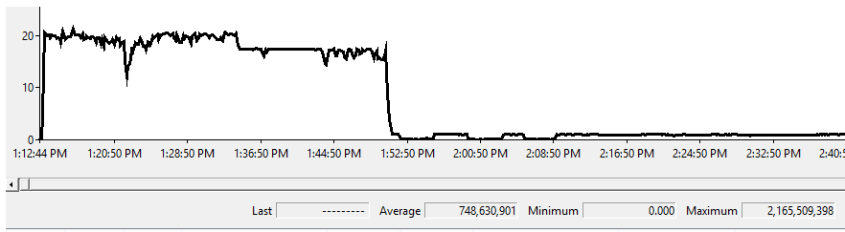

Your query discovers that the AG replica in your DR site is still taking a backup from overnight. It should have completed hours ago, but for some reason it is still running. Wondering if it is related, you kill the backup.
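Killing it might look like this. The session ID 87 is made up for illustration; use whatever the first query returns on your DR replica.

```sql
-- On the DR replica: find the session running the backup.
SELECT session_id, command, start_time, percent_complete
FROM sys.dm_exec_requests
WHERE command LIKE N'BACKUP%';

-- Kill it, using the session_id returned above (87 is a made-up value).
KILL 87;
```

Back on the primary, re-check log_reuse_wait_desc in sys.databases afterwards. The value may not change until the next log backup or checkpoint has run.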

With the backup dead, suddenly the transaction log on your primary clears and you can get back to your interrupted ~~nap~~ hard work.

This is an example of a choice in messaging within SQL Server that makes it more difficult to find a solution.

In this case, the problem of the transaction log not truncating would ideally have been marked as ACTIVE_BACKUP_OR_RESTORE; after all, it was a backup that was blocking the log truncation. But the folks that wrote the product felt AVAILABILITY_REPLICA was more appropriate, because the backup was running on a replica other than the primary and that replica was the root location of the truncation issue.

So, be aware, messaging may not always accurately reflect problems that are occurring.