For quite some time I had been seeing database restores slow down after 50-60% of the restore had completed (you can output restore progress using WITH STATS = <some value 1-100>, or use DMVs or sp_whoisactive). This was not really a big deal with small databases, where it would just take an extra couple of minutes to complete. But when I needed to restore a 16 TB database it became a real problem.
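A rough sketch of how a slowdown like this could be spotted from the progress output, assuming you capture each "N percent processed." message that WITH STATS prints along with the elapsed seconds at which it arrived. The function name `find_slowdowns`, the `factor` threshold, and the sample timings are all hypothetical, made up for illustration rather than taken from a real restore.

```python
import re

# RESTORE ... WITH STATS prints messages such as "10 percent processed."
PROGRESS_RE = re.compile(r"(\d+) percent processed")

def find_slowdowns(samples, factor=3.0):
    """Flag progress intervals much slower than the fastest one.

    samples: ordered list of (elapsed_seconds, message) pairs.
    Returns (percent_reached, seconds_per_percent) for every interval that
    took at least `factor` times longer per percent than the fastest interval.
    """
    points = []
    for elapsed, message in samples:
        match = PROGRESS_RE.search(message)
        if match:
            points.append((elapsed, int(match.group(1))))

    # Seconds spent per percentage point between consecutive messages.
    rates = []
    for (t0, p0), (t1, p1) in zip(points, points[1:]):
        if p1 > p0:
            rates.append((p1, (t1 - t0) / (p1 - p0)))

    if not rates:
        return []
    fastest = min(rate for _, rate in rates)
    if fastest <= 0:
        return []
    return [(pct, rate) for pct, rate in rates if rate >= factor * fastest]

# Hypothetical timings: steady up to 50%, then a sharp slowdown.
samples = [
    (100, "10 percent processed."),
    (200, "20 percent processed."),
    (300, "30 percent processed."),
    (400, "40 percent processed."),
    (500, "50 percent processed."),
    (1500, "60 percent processed."),
    (3500, "70 percent processed."),
]

for pct, rate in find_slowdowns(samples):
    print(f"slow interval ending at {pct}%: {rate:.0f} s per percent")
```

With a smaller STATS value (say 1 or 5) the intervals are finer, so the point where the rate falls off is easier to pin down.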

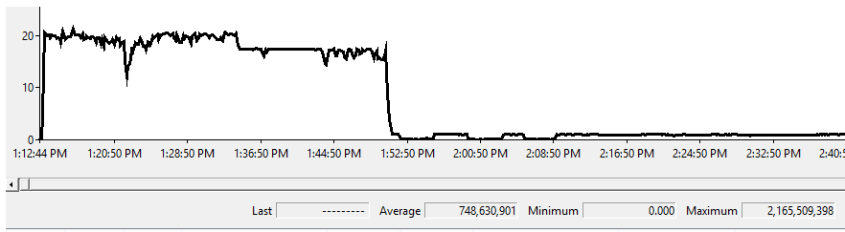

The restore would kick off at a decent rate, giving me around 2 GB/sec (restoring over a WAN from multiple files to a server with 144 cores, 512 GB of RAM, and multiple data files on NVMe storage). This was acceptable. Then, a little over halfway through the restore, performance dropped to 200 MB/sec and then 100 MB/sec (viewable through perfmon).
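To put those numbers in perspective, here is a back-of-the-envelope estimate of what the drop does to total restore time. It is only a sketch under stated assumptions: that the full 16 TB is moved at the quoted rates, that the fast phase covers 55% of the data, that the entire remainder runs at 100 MB/sec, and that 2 GB/sec means 2048 MB/sec. The function name `restore_hours` is made up for this example.

```python
def restore_hours(size_tb, fast_fraction, fast_mb_s, slow_mb_s):
    """Estimate restore duration in hours for a two-phase restore.

    fast_fraction of the data moves at fast_mb_s, the rest at slow_mb_s.
    """
    size_mb = size_tb * 1024 * 1024
    fast_seconds = size_mb * fast_fraction / fast_mb_s
    slow_seconds = size_mb * (1 - fast_fraction) / slow_mb_s
    return (fast_seconds + slow_seconds) / 3600

# Assumed figures: 16 TB, 2048 MB/sec fast phase, 100 MB/sec slow phase.
full_speed = restore_hours(16, 1.0, 2048, 100)
with_slowdown = restore_hours(16, 0.55, 2048, 100)

print(f"full speed throughout:  {full_speed:.1f} hours")
print(f"slowdown after 55%:     {with_slowdown:.1f} hours")
```

Under these assumptions the restore goes from a little over two hours to most of a day, which is why the slowdown barely registers on a small database and becomes a serious problem at 16 TB.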

This issue I could reproduce time and again. Something was up with the product.

To investigate, I kicked off another restore and watched the process. Things started swimmingly, reading from the 4 backup files on the NAS and processing fast. Then the restore seemed to finish reading 3 of those files and slowed down dramatically while reading the fourth. At the same time I could see a single core on the database server pegged at 100% usage.

There wasn’t anything I could do to resolve this issue (short of using a ridiculous number of backup files in an attempt to make the low perf period as short as possible).

Given that this had the potential for a real RTO/RPO problem it was time to call in the big guns over at Microsoft support.

We ran through a few more scenarios, including taking stack dumps during the period when the restore was slow (DBCC STACKDUMP <- NEVER EVER DO THIS ON A PROD SYSTEM).

Working through the dumps, the MS support engineer found a bug from last year whereby disks formatted with a 4K sector size could experience slow restore performance.

I checked my servers to confirm that we were using 4K sectors with the built-in FSUTIL command, in this case FSUTIL FSINFO NTFSINFO <drive>. This provides a wealth of useful information about the disk, and showed that it was indeed using a 4K sector size.
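If you have a lot of servers to check, the sector-size lines can be pulled out of captured FSUTIL output with a few lines of script. This is a minimal sketch: the sample text below is a trimmed, made-up illustration of the output's general shape (not output from my servers), the field labels may differ between Windows versions, and treating either the logical or the physical value being 4096 as "4K" is an assumption of this example. The names `sector_sizes` and `has_4k_sectors` are hypothetical.

```python
# Illustrative sample only: trimmed, made-up values in the general shape of
# `fsutil fsinfo ntfsinfo <drive>` output. Labels can vary by Windows version.
SAMPLE_OUTPUT = """\
Bytes Per Sector  :                4096
Bytes Per Physical Sector :        4096
Bytes Per Cluster :                65536
"""

SECTOR_FIELDS = ("Bytes Per Sector", "Bytes Per Physical Sector")

def sector_sizes(ntfsinfo_text):
    """Return the sector-size fields found in the text as {label: bytes}."""
    sizes = {}
    for line in ntfsinfo_text.splitlines():
        key, sep, value = line.partition(":")
        key, value = key.strip(), value.strip()
        # Skip other fields and non-numeric values.
        if sep and key in SECTOR_FIELDS and value.isdigit():
            sizes[key] = int(value)
    return sizes

def has_4k_sectors(ntfsinfo_text):
    """True if any reported sector size is 4096 bytes (assumption: either counts)."""
    return 4096 in sector_sizes(ntfsinfo_text).values()

print(sector_sizes(SAMPLE_OUTPUT))
print(has_4k_sectors(SAMPLE_OUTPUT))
```

To use it for real, redirect the FSUTIL output for each drive to a text file and pass the file contents to `has_4k_sectors`.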

Fortunately, this issue was fixed in October last year with the following releases:

- SQL Server 2014 Service Pack 3

- SQL Server 2014 Service Pack 2 – Cumulative Update 12

- SQL Server 2016 Service Pack 2 – Cumulative Update 1

- SQL Server 2016 Service Pack 1 – Cumulative Update 9

- SQL Server 2017 – Cumulative Update 7

The sad thing for me was that the particular instance I was restoring to was SQL Server 2012, for which no patch was released (as that version is out of mainstream support). But I was able to confirm that the slowdown did not happen when restoring to an appropriately patched, fully supported version of SQL Server. Time to press management harder to allow us to upgrade.

So if you are seeing restores slow down partway through and are on SQL Server 2014/2016/2017, get yourself patched up to date. If you are stuck on an older version, either redo the low-level formatting on your storage (not recommended), take uncompressed backups (mildly recommended), or work to get yourself onto a fully supported version of the product.